Introduction

Last week we pushed out a flurry of updates that have changed, at least for us, the way we can prototype and test entire REDHAWK systems. The project is called Docker-REDHAWK (pre-built images are available on Docker Hub).

I’m going to start by assuming that you’re familiar with Docker from at least a high level (images built from layers of changes to a filesystem, instances of which are deployed as containers at runtime). But what you may not be familiar with is REST-Python (specifically, our actively maintained fork of the original).

So let’s get right to it, then!

What is it?

REST-Python is a Tornado-based webserver for REDHAWK. The main organization originally published it a few years ago as part of the LiveDVD RTL demo, which is still available for REDHAWK 1.10 (note: the current non-beta version is 2.0.5). Basically, you pop in the DVD, boot the OS, and voilà: you have a demo of REDHAWK SDR that works with the ubiquitous RTL2832U device to tune FM radio and play the audio back through a web browser. It was impressive, but focused on that single application.

And that version hasn’t been touched again since 2015.

For us, though, that release prompted a quick fork, because it had clear potential for use in our systems, some of which we’ve demoed a few times on our YouTube channel over the last couple of years. We quickly began adapting it to be less focused on that one web application and instead provide an ever-growing suite of features that give us access to much of REDHAWK’s infrastructure. Our version of REST-Python is now one of the many go-to tools we use for flexible integration with REDHAWK, since a great deal of command, control, and data can all be exchanged as JSON over REST and web socket interfaces. Ultimately, that means having REDHAWK in a larger system does not force everything else to bow to REDHAWK’s environmental requirements, or vice versa. It also means that the control interface to an infrastructure-scale SDR can be deployed to a mobile device like a tablet.

Why update it now?

Well, to be honest, it was about time we bumped the external version to the most recent non-beta REDHAWK version (and removing all those development branches tidied things up a bit).

Also, now that Docker-REDHAWK was announced last week, we have a clean way to stand up a test environment and verify that the main core features do indeed work with REDHAWK 2.0.5. Since then, I have taken the liberty of rebuilding the geontech/redhawk-webserver Docker image with this 2.0.5-0 version of REST-Python. I then re-pushed it so that all of you can use it too, while we continue to update the published details of the API.

The current list of published features includes:

- Ability to view all of the main REDHAWK entities (Domain, Device, etc.)

- Retrieve and edit properties (including allocations)

- Manipulate FEI port interfaces (Tuner, GPS, NavData, etc.)

- Read from any named Event Channel over a web socket interface

- Stream BULKIO data, with SRI, over a web socket

Soon-to-be-published features include access to the core framework’s Allocation Manager and the SCA File System (including the ability to read, write, create and delete). Those are two powerful features that we look forward to using for some interesting demos that are in the pipeline.

So if you want to use it, be sure to star the repo or bookmark the API page and watch for updates in the coming weeks.

Inching Towards CI Tooling

Ah, he finally gets to the point!

One of the big things we’ve been skipping over since forking from the main branch is updating the original’s unpublished test environment. So last week I spent a few days applying Docker-REDHAWK to REST-Python’s test setup with Docker Compose, to see where I might end up.

Docker Compose lets you describe a number of things, but the gist here is describing a REDHAWK system architecture with all the parts necessary to run REST-Python’s tests, all neatly networked together as a group. And thanks to Docker’s native support for macOS (by way of HyperKit), I was able to do all of this from macOS Sierra.

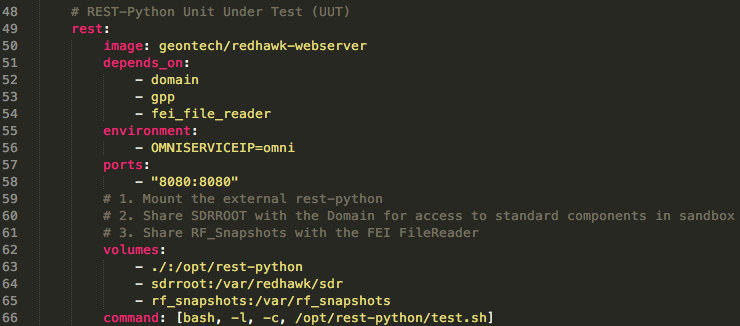

About that description: Compose files are written in YAML, a simple, human-readable data format. In this case it lets us specify which images need to be run (or built), what to call them (host names, etc.), what volumes need to be shared between containers, and the various interdependencies between the resulting containers (A depends on B, etc.). Let’s look at a real example with some complexity to it.
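Those interdependencies (depends_on in Compose) determine start order: a service is started only after the services it depends on. Note that this controls start order only; it does not wait for a dependency to be "ready." As a rough illustration of that ordering, here is a minimal Python sketch using the standard library's graphlib (Python 3.9+). Only rest and omniserver are names taken from this post. The other service names and all of the dependency edges are hypothetical placeholders, not copied from the actual compose file.

```python
from graphlib import TopologicalSorter, CycleError  # Python 3.9+

# Hypothetical dependency map: each service lists the services it depends on.
# Only "rest" and "omniserver" are named in the post; the other names and the
# edges are placeholders for illustration.
DEPENDS_ON = {
    "omniserver": [],
    "domain": ["omniserver"],
    "gpp": ["domain"],
    "fei_file_reader": ["domain"],
    "rest": ["domain", "gpp", "fei_file_reader"],
}


def start_order(depends_on):
    """Return service names ordered so each service follows all of its dependencies.

    Raises ValueError if the dependencies form a cycle.
    """
    try:
        return list(TopologicalSorter(depends_on).static_order())
    except CycleError as exc:
        # CycleError stores the offending cycle as its second argument.
        raise ValueError("circular depends_on: {}".format(exc.args[1])) from exc
```

With this map, omniserver always comes first and rest always comes last, because every other service is one of rest's direct or indirect dependencies.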

Example YAML

The whole file lives in the root of the REST-Python repository. The block in the image covers just the REST-Python server.

Among the constituent pieces: the server’s container will be named rest, and its image is, of course, the current geontech/redhawk-webserver image. It depends on three other services being created as well: a Domain (naturally), a GPP (for launching test waveforms), and an FEI File Reader instance (for future use in vetting Device interfaces). We also set the environment variable OMNISERVICEIP to the host name (which is also the container name) of the omniserver (not shown).
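To make the role of OMNISERVICEIP concrete, here is a minimal sketch of how a container's startup script might turn that variable into omniORB InitRef entries. This works because Compose services on a shared network can reach each other by name. The two lines follow the omniORB.cfg form used in REDHAWK's installation instructions: the Naming Service on port 2809 and omniEvents on port 11169. Whether the Docker-REDHAWK images build their configuration exactly this way is an assumption. The helper name and the localhost fallback are hypothetical.

```python
import os

NAMING_PORT = 2809   # standard CORBA Naming Service port
EVENTS_PORT = 11169  # omniEvents default port


def omniorb_initrefs(env=None):
    """Build omniORB.cfg InitRef lines pointing at the host named by OMNISERVICEIP.

    Hypothetical helper: falls back to localhost when the variable is unset.
    """
    env = os.environ if env is None else env
    host = env.get("OMNISERVICEIP", "localhost")
    return (
        "InitRef = NameService=corbaname::{0}:{1}\n"
        "InitRef = EventService=corbaloc::{0}:{2}/omniEvents\n"
    ).format(host, NAMING_PORT, EVENTS_PORT)
```

Passing the environment in as a mapping keeps the function easy to exercise without touching the real process environment.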

Next up are the volumes to mount, and this is where things might look a little strange. Keep in mind that this compose file is in the root of REST-Python, so running docker-compose relative to that path explains the source of the first volume.

The first volume mounts the current working directory (i.e., the cloned REST-Python repository’s root directory) at the internal installation location. This way, when the container launches, it has our current unit under test at runtime. We could just as easily have passed it in as a build argument, since the Dockerfile accepts arguments for the REST-Python repository and port, but this saved time and is effectively equivalent for us right now. The remaining two are named volumes, declared elsewhere in the file, which REST-Python shares with the Domain (sdrroot) and the FEI File Reader (rf_snapshots), respectively.
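Compose's short volume syntax is SOURCE:TARGET[:MODE], and the source determines what kind of mount you get. A source starting with ., / or ~ is a bind mount from the host, which is how the current working directory ends up inside the container. A bare name refers to a named volume such as sdrroot or rf_snapshots. Here is a minimal Python sketch of that rule. It ignores Windows drive-letter paths. The container-side paths in the example are hypothetical, except /var/redhawk/sdr, which is REDHAWK's default SDRROOT.

```python
def parse_volume(spec):
    """Split a Compose short-syntax volume entry and classify its source.

    Returns a dict with source, target, mode, and kind, where kind is
    "bind" (host path), "volume" (named volume), or "anonymous" (target only).
    Windows drive-letter paths are not handled in this sketch.
    """
    parts = spec.split(":")
    if len(parts) == 1:
        return {"source": None, "target": parts[0], "mode": "rw", "kind": "anonymous"}
    if len(parts) > 3:
        raise ValueError("unexpected volume spec: {!r}".format(spec))
    source, target = parts[0], parts[1]
    mode = parts[2] if len(parts) == 3 else "rw"  # Compose mounts read-write by default
    kind = "bind" if source.startswith((".", "/", "~")) else "volume"
    return {"source": source, "target": target, "mode": mode, "kind": kind}


# Hypothetical entries shaped like the rest service's three volumes.
for spec in [".:/opt/rest-python", "sdrroot:/var/redhawk/sdr", "rf_snapshots:/data/rf"]:
    print(spec, "->", parse_volume(spec)["kind"])
# .:/opt/rest-python -> bind
# sdrroot:/var/redhawk/sdr -> volume
# rf_snapshots:/data/rf -> volume
```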

Finally, we have the overridden command which starts the test script (the default command of course runs the server). If you look at the full file, you’ll see the other images are run with defaults and that the FEI_FileReader is actually a reference to the Dockerfile that resides in the root directory of its repo — in other words, we build it the first time we run this compose file.

The test.sh Script

The script run by the rest container uses Components installed in the Domain’s SDRROOT, together with the Python Sandbox, to create and install a test Waveform that will be manipulated during REST-Python’s tests. It also uses the Sandbox to generate a tone file for the FEI File Reader and installs it into the shared rf_snapshots volume. After that, the script kicks off the Tornado tests.
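The script itself generates its tone with REDHAWK's Python Sandbox. As a dependency-free stand-in, here is a minimal sketch of synthesizing a complex tone with only the Python standard library. The sample format (interleaved little-endian 32-bit float I/Q), the parameters, and the file path in the usage comment are all assumptions. Check what the FEI File Reader actually expects before feeding it a file like this.

```python
import math
import struct


def tone_bytes(freq_hz, sample_rate_hz, n_samples, amplitude=1.0):
    """Return the complex tone exp(j*2*pi*f*n/fs) as raw bytes.

    Assumed output format: interleaved little-endian float32 I/Q pairs.
    """
    out = bytearray()
    for n in range(n_samples):
        phase = 2.0 * math.pi * freq_hz * n / sample_rate_hz
        out += struct.pack("<ff", amplitude * math.cos(phase), amplitude * math.sin(phase))
    return bytes(out)


def write_tone(path, freq_hz, sample_rate_hz, n_samples):
    """Write the tone to a file, e.g. somewhere inside a shared volume."""
    with open(path, "wb") as f:
        f.write(tone_bytes(freq_hz, sample_rate_hz, n_samples))


# Hypothetical usage: one second of a 1 kHz tone sampled at 48 kHz.
# write_tone("/data/rf/tone_1kHz.cf32", 1000.0, 48000.0, 48000)
```

Each complex sample takes 8 bytes, so one second at 48 kHz comes to 384,000 bytes.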

As the tests run, the log fills with information about every test being performed: successes, failures, the various exceptions that get caught and handled along the way, etc. It concludes with a simple message stating that everything was fine (hopefully), and the rest container exits with code 0 (i.e., success).

Note: At this time, no defined test exercises the FEI File Reader in depth. However, those behaviors didn’t change from the 1.10.x to the 2.0.x series, other than the names of kinds changing, which had no impact on whether or not we could allocate and tune FEI Devices. In other words, the tests for those interfaces are coming, and for now we have high confidence that they all still work.

A Trial Run

The non-CI route to running through the tests is fairly simple:

```shell
git clone https://github.com/GeonTech/rest-python
cd rest-python
docker-compose up
```

A few moments later (once the FEI File Reader is built, the other images are downloaded, etc.), the tests finish with a summary message reporting pass or failure. We can then break out (CTRL+C) and run docker-compose down && docker volume prune to stop and remove the resulting containers as well as their shared volumes (the prune step will prompt you for confirmation).

Once we integrate this with a CI runner, of course, things become even simpler. Push to a branch, and the runner watches the above process until the rest container exits. It then checks the exit code and posts the result as the pass/fail status for that branch.
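The runner logic can be sketched in a few lines of Python. This is a minimal sketch, assuming docker-compose is on the PATH and the test container is the service named rest. docker-compose up --exit-code-from SERVICE implies --abort-on-container-exit, so the stack stops as soon as a container exits (here, normally rest when its tests finish) and the command returns rest's exit code. docker-compose down -v then removes the containers plus any volumes declared in the compose file, assuming sdrroot and rf_snapshots are declared there. This replaces the interactive docker volume prune step. The function name and its injectable run parameter are hypothetical; the parameter exists only so the logic can be checked without Docker.

```python
import subprocess


def run_compose_tests(service="rest", run=subprocess.run):
    """Hypothetical helper: run the Compose test stack and report whether
    `service` exited with code 0.

    Teardown always runs, even if `docker-compose up` raises, so a failed
    run does not leave containers or volumes behind.
    """
    try:
        # --exit-code-from makes `up` return the chosen service's exit code.
        result = run(["docker-compose", "up", "--exit-code-from", service])
        return result.returncode == 0
    finally:
        # -v also removes the volumes declared in the compose file.
        run(["docker-compose", "down", "-v"])
```

In a CI job, something like sys.exit(0 if run_compose_tests() else 1) hands the result back to the runner as the job's status.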

Conclusion

This has been a short overview of how we’re using Docker-REDHAWK to start building up some nice continuous integration testing for REST-Python (among other offerings). We’ll also be using it for a number of other things in the coming months, like standing up our Cognitive Radio framework and doing some other application development with Angular-REDHAWK, so keep an eye out for those posts.

Some of you probably noticed the FEI_FileReader’s involvement. If you haven’t checked out that Device recently, do so. You’ll see I’ve included a Dockerfile and Compose file for building a Docker-REDHAWK image of it as a quick demo environment (bring your own RF snapshots, or synthesize some as I did for these tests).

If you haven’t already, be sure to bookmark this site and check back in regularly. Cheers!

The Docker Compose logo is property of its respective rights holder.